I haven’t had the pleasure of designing a solution based on the AFF A700 platform yet; maybe one glorious day… Quite often, performance and capacity requirements can be met with lesser platforms such as the AFF A300 or even the AFF A200.

Since NetApp is both a scale-up and a scale-out solution, many non-MetroCluster setups that require the performance and/or scale of a single AFF A700 HA pair could also be built from multiple AFF A300 / AFF A200 HA pairs. With MetroCluster, scale-out capability is more limited, with options for 2-node, 4-node or 8-node setups, so MetroCluster might be one use case where an AFF A700 is more likely.

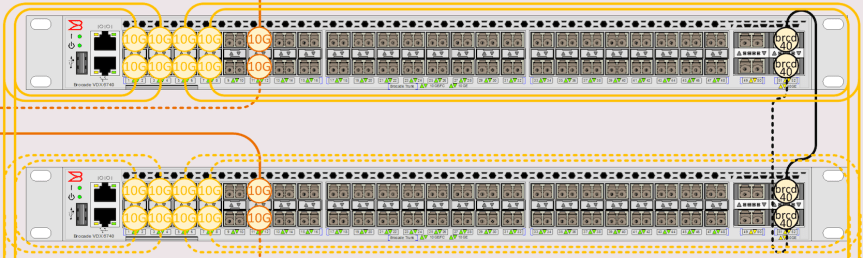

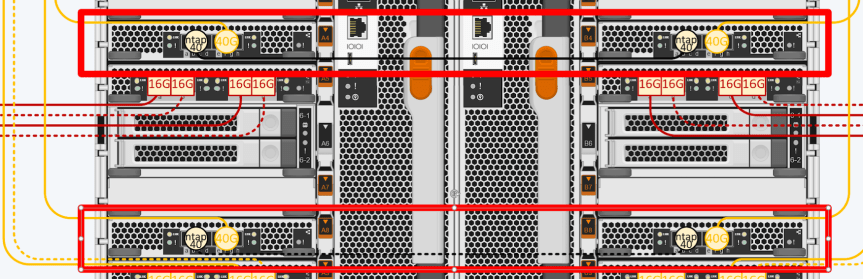

To get more familiar with the AFF A700 platform, I decided to make this drawing. This also gave me an opportunity to test my new V2 AFF A700 / FAS 9000 stencils.

Lessons learned:

Both Atto FC ports can be used

It is now supported to connect both FC ports on Atto Bridges to provide more bandwidth to disk shelves. Additionally I’ve used quad-path SAS cabling for shelf-to-shelf connections.

Using two FC links per Atto bridge required slight tuning of my Atto and FC switch stencils. The pre-populated port lists are not built to accommodate this, so I manually edited the port list for the Back-End SAN switch in the sample drawing. I will update the stencils later to contain these port lists.

Also, the port assignment box for the Atto bridge was not built to accommodate quad-path SAS cabling. Only dual-path SAS cabling information is displayed.

Quad-Port FCVI Adapter

The FCVI adapter for AFF A700 and FAS 9000 is a four-port adapter (as opposed to lesser platforms, where the FCVI adapter is a dual-port adapter). This gives more bandwidth to the FCVI connections (used to mirror NVRAM content from site to site).

40GbE Adapters and Cluster Interconnect links

By default, the AFF A700 / FAS9000 platform uses 40GbE links for Cluster Interconnect connectivity. Using a single 40GbE adapter per controller is supported, but I decided to make the configuration more resilient by using two 40GbE adapters per controller to protect against adapter failure.

However, I’m using only one 40GbE port on each adapter for the Cluster Interconnect network. This leaves two 40GbE ports per controller for host-side connections.
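
The port budget for this layout can be checked with a few lines of arithmetic. This is only a sketch: the function name and its parameters are hypothetical, and it assumes two dual-port 40GbE adapters per controller with one port on each adapter reserved for the Cluster Interconnect.

```python
def host_facing_ports(adapters: int, ports_per_adapter: int,
                      cluster_ports_per_adapter: int) -> int:
    """Return the 40GbE ports per controller left for host-side connections."""
    if cluster_ports_per_adapter > ports_per_adapter:
        raise ValueError("cannot reserve more ports than the adapter has")
    return adapters * (ports_per_adapter - cluster_ports_per_adapter)


# Assumed layout: 2 adapters, 2 ports each, 1 port per adapter for the cluster network.
remaining = host_facing_ports(adapters=2, ports_per_adapter=2,
                              cluster_ports_per_adapter=1)
print(f"{remaining} x 40GbE ports per controller left for hosts")
```

With a single adapter per controller the same calculation leaves only one host-side port, and the cluster link has no protection against adapter failure.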

The 40GbE adapters for the AFF A700 platform are dual-ASIC adapters, unlike the 40GbE adapters used with the AFF A700s platform, which are single-ASIC adapters. A single ASIC limits the total bandwidth available to the adapter to 40 Gbps. If both ports are used in 40GbE mode, you can only get 40 Gbps of throughput, even though the nominal combined speed of those ports is 2 x 40 Gbps = 80 Gbps. If you split an AFF A700s 40GbE adapter port into 4 x 10GbE connections, the other port on the adapter is disabled due to lack of available throughput.

With the dual-ASIC design of the AFF A700 40GbE adapter, you actually get the full 40 Gbps of bandwidth on both ports at the same time. This also makes it possible to use one port at 40GbE while the other operates in 4 x 10GbE mode with a break-out cable (as seen in the sample drawing: ports e4e, e4f, e4g, e4h and e8e, e8f, e8g, e8h).
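
The difference between the two adapter designs can be illustrated with a small model. This is a rough sketch, not a description of the actual hardware: the class, the "one port's worth of traffic per ASIC" rule and the `breakout_ports` helper are assumptions made for illustration, with the port names taken from the sample drawing.

```python
from dataclasses import dataclass
from typing import List

PORT_SPEED_GBPS = 40  # nominal speed of each 40GbE port


@dataclass(frozen=True)
class Adapter40GbE:
    name: str
    ports: int
    asics: int

    def nominal_gbps(self) -> int:
        """Sum of the nominal port speeds."""
        return self.ports * PORT_SPEED_GBPS

    def usable_gbps(self) -> int:
        """Assumption: each ASIC carries at most one port's worth of traffic."""
        return min(self.ports, self.asics) * PORT_SPEED_GBPS


def breakout_ports(slot: int, first_letter: str = "e", lanes: int = 4) -> List[str]:
    """Name the 10GbE ports created when one 40GbE port is split, e.g. e4e..e4h."""
    start = ord(first_letter)
    return [f"e{slot}{chr(start + i)}" for i in range(lanes)]


a700 = Adapter40GbE("AFF A700 (dual-ASIC)", ports=2, asics=2)
a700s = Adapter40GbE("AFF A700s (single-ASIC)", ports=2, asics=1)

for adapter in (a700, a700s):
    print(f"{adapter.name}: nominal {adapter.nominal_gbps()} Gbps, "
          f"usable {adapter.usable_gbps()} Gbps")

for slot in (4, 8):
    print(f"slot {slot} break-out ports: {', '.join(breakout_ports(slot))}")
```

The model only captures the aggregate bandwidth ceiling; it does not model the A700s behaviour of disabling the second port when the first is split into 4 x 10GbE.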

I also had to update the port lists for the 10GbE switches to include e, f, g and h ports for adapters other than the onboard ports. I will update the port lists the next time I update the switch stencils.

Downloads

Please visit the NetApp Downloads page or use these direct links to the PDF and Visio versions of the sample drawing.

Sami – Love this page – 4 node a700 and metrocluster – but maybe i missed it … why the 20×15.3TB SSD 4x960GB SSD – why not all 15.3TB – thanks


Hi Joe,

Thanks for the compliments. The smaller SSDs are for root aggregates. MetroCluster requires dedicated root aggregate drives, and there is no point in using (and paying for) larger-capacity drives for them. Maybe I should update my drawings, as there are no 4+20 combo shelves available in the NetApp quoting tool; they have 6+18 shelves available. If using only one shelf per site, I would use RAID4 for root aggregates, i.e. 1 data drive + 1 parity drive + 1 spare. The other option would be RAID-DP (1 data + 2 parity), but that would lead to problems when replacing failed drives: if a 960GB SSD failed, ONTAP would pick the next best available spare (15.3TB) for the rebuild, and you would end up with a root aggregate of 2x960GB + 1x15.3TB drives. Also, the RMA drive from NetApp would be 960GB; you would not receive a 15.3TB drive as a replacement. Sure, you could manually fail the 15.3TB drive in the root aggregate and rebuild with the newly arrived 960GB drive, but that is an unnecessary hassle in my opinion.
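
The RAID4 versus RAID-DP reasoning can be played through with a toy model. This is a simplified sketch and not ONTAP's real spare-selection logic: it assumes three 960GB drives per root aggregate, one 15.3TB spare (rounded to 15300GB), and a hypothetical rule that the smallest spare at least as large as the failed drive is chosen.

```python
from typing import List, Optional

SMALL_GB = 960
LARGE_GB = 15300  # 15.3TB, rounded for illustration


def pick_spare(failed_gb: int, spares_gb: List[int]) -> Optional[int]:
    """Assumed rule: the smallest spare that is at least as large as the failed drive."""
    candidates = [size for size in spares_gb if size >= failed_gb]
    return min(candidates) if candidates else None


def rebuild_after_failure(members_gb: List[int], spares_gb: List[int]) -> List[int]:
    """Fail the first member and return the drive sizes in the aggregate after rebuild."""
    failed, survivors = members_gb[0], members_gb[1:]
    replacement = pick_spare(failed, spares_gb)
    if replacement is None:
        raise RuntimeError("no suitable spare available")
    return sorted(survivors + [replacement])


# RAID4: 1 data + 1 parity, the third 960GB drive stays as a matching spare.
raid4 = rebuild_after_failure([SMALL_GB, SMALL_GB], [SMALL_GB, LARGE_GB])

# RAID-DP: 1 data + 2 parity, no 960GB spare left, only the 15.3TB one.
raid_dp = rebuild_after_failure([SMALL_GB] * 3, [LARGE_GB])

print("RAID4 after rebuild:  ", raid4)
print("RAID-DP after rebuild:", raid_dp)
```

In this model the RAID4 layout stays on 960GB drives after a failure, while the RAID-DP layout ends up with a 15.3TB drive in the root aggregate.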


OK, yes, thanks. Sure, it makes sense because you drew it up with MetroCluster in mind. If there was no need for MetroCluster, then you could use RAID-TEC and Advanced Data Partition Enhancements, correct? And stick with one size of SSD for the whole environment?


With the current implementation of MetroCluster you cannot use ADP, because of the ATTO bridges sitting between ONTAP and the disk drives. For smaller non-Metro setups I would use a uniform drive size and ADP, which is now also supported with FAS8200 and FAS9000. There is, however, a limit on how many drives can be used with ADP, and sometimes it makes sense to use dedicated root aggregate drives and mixed shelves with non-Metro setups as well.

There are rumors about a new iSCSI-based MetroCluster. With this one there is no need for Atto bridges, a dedicated back-end SAN or dedicated root aggregate drives, making it much more cost-effective, especially for smaller-capacity MetroClusters. Maybe already with ONTAP 9.3; I haven’t had time to get familiar with this topic, too busy with my new position and getting things rolling 🙂
